While accidental cameras [17,19] are just that, accidental, several papers covered actual hardware implementations [1,8,9,13]. I liked the fact that we are getting closer to the sensing elements, as this is truly how radically new hardware will eventually prevail. Several algorithm implementations were also made available by researchers [5,6,12,25], and with a new tensor decomposition [6] we may have something that goes as fast as sFFT [7]. This may be a new route for other endeavors in compressive sensing, as this tensor decomposition is SVD based and could potentially use some of the Robust PCA results. This is of note because many of the developments in compressive sensing, from 2D images, phase retrieval data, 3D views or 2D + hyperspectral data to 5D data such as that found in plenoptic cameras, revolve around some unacknowledged tensor decomposition. The typical beginner's question on how to perform compressed sensing on an image is a witness to that unacknowledged issue (you need to reshape a 2D image into a 1D vector).
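To make the reshaping remark concrete, here is a minimal sketch in plain Python. It flattens a toy 2D image into a 1D vector, applies a random Gaussian measurement matrix to it, and then checks that a separable measurement `A X B^T` on the 2D image is the same thing as applying the Kronecker product `kron(A, B)` to the flattened vector. The helper names (`flatten`, `gaussian_matrix`, `kron`, and so on), the matrix sizes and the Gaussian ensemble are all assumptions made for illustration; none of them come from the papers listed here, and in practice one would use NumPy's `reshape` and `kron` instead.

```python
import random

def flatten(image):
    """Row-major vectorization: a rows x cols image becomes a list of length rows*cols."""
    return [pixel for row in image for pixel in row]

def gaussian_matrix(num_rows, num_cols, seed):
    """Hypothetical measurement matrix with i.i.d. Gaussian entries (illustration only)."""
    rng = random.Random(seed)
    scale = num_rows ** -0.5
    return [[rng.gauss(0.0, 1.0) * scale for _ in range(num_cols)]
            for _ in range(num_rows)]

def matvec(matrix, vector):
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]

def matmul(left, right):
    columns = list(zip(*right))
    return [[sum(l * r for l, r in zip(row, col)) for col in columns] for row in left]

def transpose(matrix):
    return [list(col) for col in zip(*matrix)]

def kron(a, b):
    """Kronecker product; entry (p*rows_b + q, i*cols_b + j) is a[p][i] * b[q][j]."""
    return [[a_val * b_val for a_val in a_row for b_val in b_row]
            for a_row in a for b_row in b]

# Toy 3x4 "image" whose pixel values are 0..11.
image = [[float(4 * r + c) for c in range(4)] for r in range(3)]

# Usual compressed sensing setup: y = Phi x, with x the flattened image.
x = flatten(image)
phi = gaussian_matrix(5, len(x), seed=0)
y = matvec(phi, x)

# Separable measurement on the 2D array, and the same thing on the 1D vector.
a = gaussian_matrix(2, 3, seed=1)   # acts on the rows of the image
b = gaussian_matrix(3, 4, seed=2)   # acts on the columns of the image
y_separable = flatten(matmul(matmul(a, image), transpose(b)))
y_kron = matvec(kron(a, b), x)
max_gap = max(abs(s - k) for s, k in zip(y_separable, y_kron))
```

The two measurement vectors agree up to floating point error, which is one way to see the tensor structure hiding behind the innocuous reshape: the 6 x 12 matrix `kron(a, b)` is never needed if one keeps the image as a 2D array.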

The other idea that is driving people's thoughts these days is the need to go beyond the concept of sparsity [2,3,21] and use prior information such as complexity [3]. We need to know what constraints and priors will make both CS and Machine Learning meet [3]. But there is also some urgency, as Rich reminds us [15], in that "We generated more data than there are stars in the Universe" [14]. Let's put some context here: this month saw some new benchtop sequencers shipping. With these devices, we should expect to sequence a genome in under a day. Still no word on Oxford Nanopore, but recall their announcement back in February, as reported by Nick Loman (their new technology goes through DNA one piece at a time, i.e. there is no need to cut it into several smaller parts as other technologies do):

....For actual base-reads, ONT still has not achieved single-base sensitivity (though Brown did mention they are working on it). Instead they are reading three bases at a time, leading to 64 different current levels. They then apply the Viterbi algorithm – a probabilistic tool that can determine hidden states – to these levels to make base calls at each position.

In the same post, one can read that they have a 96% reading accuracy, which is very low in that business and probably the reason they haven't gotten a machine out yet, but they are hiring people who can make sense of their data. All this to say that soon enough, the actual counting of stars won't mean much compared to what we are about to produce. It will also radically change healthcare. Sequencing and sensing as provided by coarser devices like the ones mentioned at the very beginning of this post are in fact complementary.
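As a rough illustration of the base-calling step quoted above (three bases read at a time, 64 current levels, Viterbi decoding), here is a toy sketch. Everything in it is an assumption made for the example and not Oxford Nanopore's actual model: the current level assigned to each triplet (simply its index), the Gaussian noise, the uniform transition probabilities, and the names `LEVEL`, `simulate_levels` and `viterbi`. The only structure taken from the quote is that the hidden states are the 4^3 = 64 triplets and that the triplet advances by one base per reading.

```python
import itertools

BASES = "ACGT"
# The 4**3 = 64 hidden states: every triplet of bases.
KMERS = ["".join(p) for p in itertools.product(BASES, repeat=3)]
# Hypothetical current level per triplet (its index), purely for illustration.
LEVEL = {kmer: float(i) for i, kmer in enumerate(KMERS)}

def log_emission(observed, kmer, sigma):
    """Gaussian log-likelihood of a current reading, up to an additive constant."""
    return -0.5 * ((observed - LEVEL[kmer]) / sigma) ** 2

def simulate_levels(sequence, noise):
    """Slide a 3-base window along the sequence and add the given noise terms."""
    kmers = [sequence[i:i + 3] for i in range(len(sequence) - 2)]
    return [LEVEL[k] + n for k, n in zip(kmers, noise)]

def viterbi(observations, sigma=0.3):
    """Most likely base sequence given one current reading per triplet.

    A triplet can only follow a triplet that overlaps it by two bases. Each
    allowed transition is assumed equally likely, so the transition term is a
    constant and is dropped from the scores.
    """
    score = {k: log_emission(observations[0], k, sigma) for k in KMERS}
    backpointers = []
    for obs in observations[1:]:
        new_score, pointer = {}, {}
        for kmer in KMERS:
            # The four possible predecessors: any base followed by kmer[:2].
            best_prev = max((base + kmer[:2] for base in BASES),
                            key=score.__getitem__)
            new_score[kmer] = score[best_prev] + log_emission(obs, kmer, sigma)
            pointer[kmer] = best_prev
        backpointers.append(pointer)
        score = new_score
    # Trace back from the best final triplet.
    state = max(score, key=score.get)
    path = [state]
    for pointer in reversed(backpointers):
        state = pointer[state]
        path.append(state)
    path.reverse()
    # First triplet in full, then the newly read base of each later triplet.
    return path[0] + "".join(kmer[-1] for kmer in path[1:])

true_sequence = "GATTACAGT"
noise = [0.1, -0.2, 0.15, -0.05, 0.2, -0.1, 0.05]  # one term per triplet
levels = simulate_levels(true_sequence, noise)
decoded = viterbi(levels)
```

With noise this small relative to the spacing between levels, `decoded` recovers `true_sequence`. Real current levels for different triplets would be much closer together, and that is where the overlap constraint between consecutive triplets starts to matter for accuracy.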

In the realm of under-appreciated problems, I mentioned the Reddit Database [22]. I need to come back to that later.

This month, we also had a slew of interesting papers [16]. Finally, we discussed the business of science, stretching from peer review to reproducible research, in several entries [18,20,22,24,26]. There were also two workshops [10,13] and two job announcements [11,23].

- Passive millimeter-wave imaging with compressive sensing

- Compressed Sensing of EEG for Wireless Telemonitoring with Low Energy Consumption and Inexpensive Hardware

- From Compressive Sensing to Machine Learning

- Alternating Direction Methods for Classical and Ptychographic Phase Retrieval

- High-accuracy wave field reconstruction using Sparse Regularization - implementations -

- Sunday Morning Insight: QTT format and the TT-Toolbox -implementation-

- Another Super Fast FFT

- Compressed Sensing for the multiplexing of PET detectors

- A single-photon sampling architecture for solid-state imaging

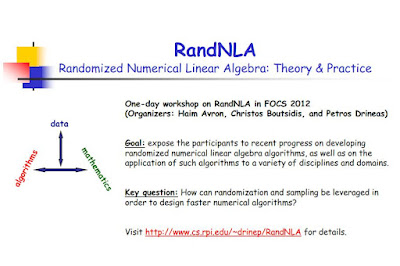

- Workshop on "Randomized Numerical Linear Algebra (RandNLA): Theory and Practice"

- PostDoc: Research Fellow in Signal Processing for Radio Astronomy

- Implementation: Iterative Reweighted Algorithms for Matrix Rank Minimization

- Nuit Blanche's Mailbag: Two-day workshop on Sparse Models and Machine Learning / Aptina and a Multi-Array Gigapixel camera

- "We generated more data than there are stars in the Universe"

- Richard Baraniuk's recent videos on Compressive Sensing

- Compressive Sensing and Advanced Matrix Factorization This Month

- The Accidental Single Pixel Camera

- Around the Blogs in 80 Summer Hours

- The accidental multi-aperture camera

- Mathematical Instruments: Nuit Blanche

- Beyond Sparsity: Minimum Complexity Pursuit for Universal Compressed Sensing

- Post Peer-Review Discussion continues and a remarkable dataset

- Post-doctoral position at CEA Saclay in image processing for multispectral data

- The most important discussion about peer-review we're not having ... until today. -updated-

- Implementation: Bilinear modelling via Augmented Lagrange Multipliers (BALM)

- A Year in Reproducible Research in Compressive Sensing, Advanced Matrix Factorization and more

Image Credit: NASA/JPL-Caltech/Malin Space Science Systems

This image was taken by Mastcam: Left (MAST_LEFT) onboard NASA's Mars rover Curiosity on Sol 52 (2012-09-28 15:29:26 UTC)

Full Resolution