Since the last Nuit Blanche in Review (April 2017), we have had to wonder about The Desperate Researchers of Computer Vision. We also featured five implementations, several in-depth articles, three theses, two Highly Technical Reference Pages, some ML hardware, a few machine learning videos (and two Paris Machine Learning meetups), and more... Enjoy!

Implementations

In-depth

Thesis

Highly Technical Reference Page

- Concrete Dropout

- A Semismooth Newton Method for Fast, Generic Convex Programming

- DEvol - Automated deep neural network design with genetic programming

- DeepArchitect: Automatically Designing and Training Deep Architectures

- Spectral Ergodicity in Deep Learning Architectures via Surrogate Random Matrices

- Relaxed Wasserstein with Applications to GANs

- The High-Dimensional Geometry of Binary Neural Networks

- Global Guarantees for Enforcing Deep Generative Priors by Empirical Risk

- Short and Deep: Sketching and Neural Networks

- Soft Recovery With General Atomic Norms

- Guaranteed recovery of quantum processes from few measurements / Improving compressed sensing with the diamond norm

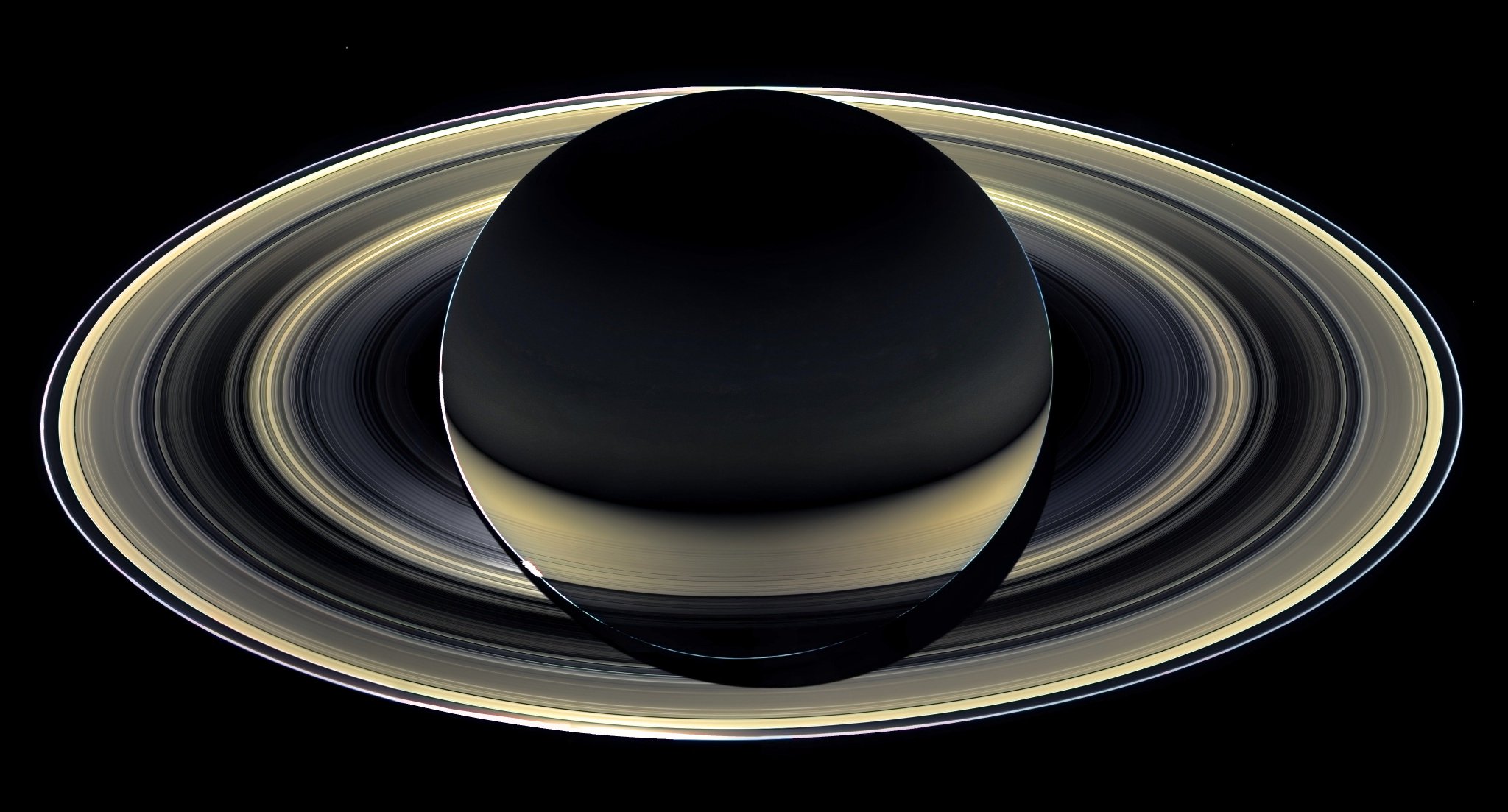

- Speckle-based hyperspectral imaging combining multiple scattering and compressive sensing in nanowire mats

- Thesis (Honors): Improved Genomic Selection using VowpalWabbit with Random Fourier Features by Jiaqin Jaslyn Zhang

- Thesis: Robust Low-rank and Sparse Decomposition for Moving Object Detection: From Matrices to Tensors by Andrews Cordolino Sobral

- Thesis: Fast Algorithms on Random Matrices and Structured Matrices by Liang Zhao

ML Hardware

Jobs

Paris Machine Learning

ML video

Video

Join the CompressiveSensing subreddit, the Google+ Community or the Facebook page and post there!

Liked this entry? Subscribe to Nuit Blanche's feed; there's more where that came from. You can also subscribe to Nuit Blanche by Email, explore the Big Picture in Compressive Sensing or the Matrix Factorization Jungle and join the conversations on compressive sensing, advanced matrix factorization and calibration issues on LinkedIn.

- Video: "Can the brain do back-propagation?" Geoff Hinton

- Sunday Morning Videos: Deep Learning and Artificial Intelligence symposium at NAS 154th Annual Meeting

- Saturday Morning Videos: Computational Challenges in Machine Learning, Simons Institute, May 1 – May 5, 2017