. Kudos to

for making them available before the meeting. It looks like Saint-Malo is going to be the place to be April 6th thru 9th. Enjoy the reading over the week-end or for some of you on the 10 hours flight to France.

Contextual Image Compression from Adaptive Sparse Data Representations by Laurent Demaret, Armin Iske, Wahid Khachabi. The abstract reads:

Natural images contain crucial information in sharp geometrical boundaries between objects. Therefore, their description by smooth isotropic function spaces (e.g. Sobolev or Besov spaces) is not sufficiently accurate. Moreover, methods known to be optimal for such isotropic spaces (tensor product wavelet decompositions) do not provide optimal nonlinear approximations for piecewise smooth bivariate

functions. Among the geometrybased alternatives that were proposed during the last few years, adaptive thinning methods work with continuous piecewise affine functions on anisotropic triangulations to construct sparse representations for piecewise smooth bivariate functions. In this article, a customized compression method for coding the sparse data information, as output by adaptive thinning, is proposed. The compression method is based on contextual encoding of both the sparse data positions and their attached luminance values. To this end, the structural properties of the sparse data representation are essentially exploited. The resulting contextual image compression method of this article outperforms our previous methods (all relying on adaptive thinning) quite significantly. Moreover, our proposed compression method also outperforms JPEG2000 for selected natural images, at both low and middle bitrates, as this is supported by numerical examples in this article.

Compressive Domain Interference Cancellation by Mark A. Davenport, Petros T. Boufounos, Richard Baraniuk. The abstract reads:

In this paper we consider the scenario where a compressive sensing system acquires a signal of interest corrupted by an interfering signal. Under mild sparsity and orthogonality conditions on the signal and interference, we demonstrate that it is possible to efficiently filter out the interference from the compressive measurements in a manner that preserves our ability to recover the signal of interest. Specifically, we develop a filtering method that nulls out the interference while maintaining the restricted isometry property (RIP) on the set of potential signals of interest. The construction operates completely in the compressive domain and has computational complexity that is polynomial in the number of measurements.

Fast Algorithm for Sparse Signal Approximation using Multiple Additive Dictionaries by Ray Maleh, Daehyun Yoon, Anna C. Gilbert. The abstract reads:

There are several models for sparse approximation: one where a signal is a sparse linear combination of vectors over a redundant dictionary and a second model in which a collection of signals is a simultaneous sparse linear combination over a single dictionary. In this work, interpolate between these two models to synthesize a single signal of interest from K highly incoherent dictionaries while enforcing simultaneous sparsity on the K resulting coefficient vectors. We define this as the parallel approximation problem, which arises quite naturally in many applications such as MRI parallel excitation using multiple transmission coils. We present an efficient algorithm to solve the parallel approximation problem called Parallel Orthogonal Matching Pursuit (POMP). We prove its correctness in a general setting and then discuss adaptations needed to make it suitable for use in an MRI parallel excitation setting. We then discuss parallel excitation in more detail and demonstrate how POMP solves the problem as accurately, but much faster, than previously proposed convex optimization methods.

An upper bound on the estimation error of the sparsest solution of underdetermined linear systems by Massoud Babaie-Zadeh, G. Hosein Mohimani, Christian Jutten. The abstract reads:

Let A be an n X m matrix with m \gt n, and suppose the underdetermined linear system As = x admits a unique sparse solution s0 (i.e. it has a solution s0 for which ks0k0 \lt 1 2 spark(A)). Suppose that we have somehow a solution (sparse or non-sparse) ^s of this system as an estimation of the true sparsest solution s0. Is it possible to construct an upper bound on the estimation error k^s - s0k2 without knowing s0? The answer is positive, and in this paper we construct such a bound which, in the case A has Unique Representation Property (URP), depends on the smallest singular value of all n X n submatrices of A.

Algorithms for Multiple Basis Pursuit Denoising by Alain Rakotomamonjy. The abstract reads:

We address the problem of learning a joint sparse approximation of several signals over a dictionary. We pose the problem as a matrix approximation problem with a row-sparsity inducing penalization on the coefficient matrix. We propose a simple algorithm based on iterative shrinking for solving the problem. At the present time, such a problem is solved either by using a Second-Order Cone programming or by means of a MFocuss algorithm. While the former algorithm is computationally expensive, the latter is efficient but present some pitfalls like presences of fixed points which are undesiderable when solving a convex problem. By analyzing the optimality conditions of the problem, we derive a simple algorithm. The algorithm we propose is efficient and is guaranteed to converge to the optimal solution, up to a given tolerance. Furthermore, by means of a reweighted scheme, we are able to improve the sparsity of the solution.

The Two Stage l1 Approach to the Compressed Sensing Problem by Stéphane Chrétien. The abstract reads:

This paper gives new results on the recovery of sparse signals using l1-norm minimization. We introduce a twostage l1 algorithm equivalent to the first two iterations of the alternating l1 relaxation introduced in [1]

Local orthogonal greedy pursuits for scalable sparse approximation of large signals with shift-invariant dictionaries by Boris Mailhé, Rémi Gribonval, Frédéric Bimbot, Pierre Vandergheynst. The abstract reads:

We propose a way to increase the speed of greedy pursuit algorithms for scalable sparse signal approximation. It is designed for dictionaries with localized atoms, such as timefrequency dictionaries. When applied to OMP, our modification leads to an approximation as good as OMP while keeping the computation time close to MP. Numerical experiments with a large audio signal show that, compared to OMP and Gradient Pursuit, the proposed algorithm runs in over 500 less time while leaving the approximation error almost unchanged.

A Shift Tolerant Dictionary Training Method by B. Vikrham Gowreesunker 1, Ahmed H. Tewfik. The abstract reads:

Traditional dictionary learning method work by vectorizing long signals, and training on the frames of the data, thereby restricting the learning to time-localized atoms. We study a shift-tolerant approach to learning dictionaries, whereby the features are learned by training on shifted versions of the signal of interest. We propose an optimized Subspace Clustering learning method to accommodate the larger training set for shift-tolerant training. We illustrate up to 50% improvement in sparsity on training data for the Subspace Clustering method, and the KSVD method [1] with only a few integer shifts. We demonstrate improvement in sparsity for data outside the training set, and show that the improved sparsity translates into improved source separation of instantaneous audio mixtures.

General Perturbations in Compressed Sensing by Matthew A. Herman, Thomas Strohmer. The abstract reads:

We analyze the Basis Pursuit recovery of signals when observing K-sparse data with general perturbations (i.e., additive, as well as multiplicative noise). This completely perturbed model extends the previous work of Cand`es, Romberg and Tao on stable signal recovery from incomplete and inaccurate measurements. Our results show that, under suitable conditions, the stability of the recovered signal is limited by the noise level in the observation. Moreover, this accuracy is within a constant multiple of the best-case reconstruction using the technique of least squares.

Dictionary learning with spatio-spectral sparsity constraints by Yassir Moudden, Jérome Bobin, Jean-Luc Starck, Jalal Fadili. The abstract reads:

Devising efficient sparse decomposition algorithms in large redundant dictionaries has attracted much attention recently. However, choosing the right dictionary for a given data set remains an issue. An interesting approach is to learn the best dictionary from the data itself. The purpose of this contribution is to describe a new dictionary learning algorithm for multichannel data analysis purposes under specific assumptions. We assume a large number of contiguous channels as in so-called hyperspectral data. In this case it makes sense to consider a priori that the collected data exhibits sparse spectral signatures and sparse spatial morphologies in specified dictionaries of spectral and spatial waveforms. Building on GMCA, the proposed algorithm gives a practical way to enforce the additional a priori spectral sparsity constraint on the dictionary space. Numerical experiments with synthetic and real hyperspectral data illustrate the efficiency of the proposed algorithm.

Compressive Sensing Recovery of Spike Trains Using A Structured Sparsity Model by Chinmay Hegde, Marco F. Duarte , Volkan Cevher. The abstract reads:

The theory of Compressive Sensing (CS) exploits a well-known concept used in signal compression - sparsity - to design new, efficient techniques for signal acquisition. CS theory states that for a length-N signal x with sparsity level K, M = O(K log(N/K)) random linear projections of x are sufficient to robustly recover x in polynomial time. However, richer models are often applicable in real-world settings that impose additional structure on the sparse nonzero coefficients of x.Many such models can be succinctly described as a union of K-dimensional subspaces. In recent work, we have developed a general approach for the design and analysis of robust, efficient CS recovery algorithms that exploit such signal models with structured sparsity. We apply our framework to a new signal model which is motivated by neuronal spike trains. We model the firing process of a single Poisson neuron with absolute refractoriness using a union of subspaces. We then derive a bound on the number of random projections M needed for stable embedding of this signal model, and develop a algorithm that provably recovers any neuronal spike train from M measurements. Numerical experimental results demonstrate the benefits of our model-based approach compared to conventional CS recovery techniques.

Reconstruction of Undersampled Cardiac Cine MRI data Using Compressive Sensing Principles by Pooria Zamani, Hamid Soltanian-Zadeh. The abstract reads:

Reduction of MRI data acquisition time is an important goal in the MRI field. Undersampling k-t space is a solution to reduce acquisition time. MR images may have sparse or compressible presentations in appropriate transform domains, such as wavelets. According to the Compressive Sensing (CS) theory, they can be recovered from randomly undersampled k-t space data that guarantees incoherency between sparsifying transform and sampling operator. However, pure random k-space sampling can be more time-consuming than full k-space sampling because of MRI principles. In this paper, we propose a new method based on hidden Markov models (HMM) to undersample k-space along the phase-encoding direction. To this end, we cluster extracted features of each k-space line by fuzzy c-means (FCM) method and consider the resulting class labels as the states of a Markov chain. Then we train a HMM and find the related transition matrix to each k-space line. We choose the lines having more non-diagonal transition matrices to sample data along them. We reconstruct the image by minimizing the L1 norm subject to data consistency using conjugate-gradient method and use simulation to set the parameters of the proposed method (e.g., iteration number). We apply our method to reconstruct undersampled Cardiac Cine MRI data with and without sparsifying transform, successfully. The use of fuzzy clustering as an intermediate tool to study complicated phenomena by HMM, applicability to non-dynamic MRI data and simplicity can be accounted as the specifications of the proposed method.

Greedy Deconvolution of Point-like Objects by Dirk A. Lorenz, Dennis Trede. The abstract reads:

The orthogonal matching pursuit (OMP) is an algorithm to solve sparse approximation problems. In [1] a sufficient condition for exact recovery is derived, in [2] the authors transfer it to noisy signals. We will use OMP for reconstruction of an inverse problem, namely the deconvolution problem. In sparse approximation problems one often has to deal with the problem of redundancy of a dictionary, i.e. the atoms are not linearly independent. However, one expects them to be approximatively orthogonal and this is quantified by incoherence. This idea cannot be transfered to ill-posed inverse problems since here the atoms are typically far from orthogonal: The illposedness of the (typically compact) operator causes that the correlation of two distinct atoms probably gets huge, i.e. that two atoms can look much alike. Therefore in [3], [4] the authors derive a recovery condition which uses the kind of structure one assumes on the signal and works without the concept of coherence. In this paper we will transfer these results to noisy signals. For our source we assume that it consists of a superposition of point-like objects with an a-priori known distance. We will apply it exemplarily to Dirac peaks convolved with Gaussian kernel as used in mass spectrometry.

Compressed sensing based compression of SAR raw data by Gabriel Rilling, Mike Davies, Bernard Mulgrew. The abstract reads:

Due to their noise-like features, SAR images are difficult to acquire with compressed sensing techniques. However, some parts of the images, typically associated to man-made structures, are compressible and we investigate two techniques exploiting that information to allow a compressive acquisition of the whole image. These techniques result in a significant enhancement of the image quality compared to classical compressed sensing. Moreover, compared to classical sampling and quantisation of the SAR raw data, they allow a significant reduction of bitrate with a limited increase of the distortion. However, their efficiency depends strongly on the presence of compressible parts in the image.

Circulant and Toeplitz Matrices in Compressed Sensing by Holger Rauhut. The abstract reads:

Compressed sensing seeks to recover a sparse vector from a small number of linear and non-adaptive measurements. While most work so far focuses on Gaussian or Bernoulli random measurements we investigate the use of partial random circulant and Toeplitz matrices in connection with recovery by `1-minization. In contrast to recent work in this direction we allow the use of an arbitrary subset of rows of a circulant and Toeplitz matrix. Our recovery result predicts that the necessary number of measurements to ensure sparse reconstruction by `1-minimization with random partial circulant or Toeplitz matrices scales linearly in the sparsity up to a log-factor in the ambient dimension. This represents a significant improvement over previous recovery results for such matrices. As a main tool for the proofs we use a new version of the non-commutative Khintchine inequality.

A quantitative criterion for selecting the optimal sparse representation of dynamic cardiac data in compresses MRI by Muhammad Usman, Philipp G. Batchelor. The abstract reads:

One of the important performance factors in compressed sensing (CS) reconstructions is the level of sparsity in sparse representation of the signal. The goal in CS is to find the sparsest representation of the underlying signal or image. However, for compressible or nearly sparse signals such as dynamic cardiac MR data, the quantification of sparsity is quite subjective due to issues such as dropped SNR or low contrast to noise ratio (CNR) in sparse domains such as x-f space or temporal difference domains. Hence, we need a criterion to compare different sparse representations of compressible signals. In this paper, we define a model that can fit the decay of practical compressible signals and as an application; we verify that this model can be used as a basis for selecting the optimal sparse representation of dynamic cardiac MR data.

Structured Sparsity: from Mixed Norms to Structured Shrinkage by Matthieu Kowalski, Bruno Torrésani. The abstract reads:

Sparse and structured signal expansions on dictionaries can be obtained through explicit modeling in the coefficient domain. The originality of the present contribution lies in the construction and the study of generalized shrinkage operators, whose goal is to identify structured significance maps. These generalize Group LASSO and the previously introduced Elitist LASSO by introducing more flexibility in the coefficient domain modeling. We study experimentally the performances of corresponding shrinkage operators in terms of significance map estimation in the orthogonal basis case. We also study their performance in the overcomplete situation, using iterative thresholding.

Sparse filter models for solving permutation indeterminacy in convolutive blind source separation by Prasad Sudhakar, Rémi Gribonval. The abstract reads:

Frequency-domain methods for estimating mixing filters in convolutive blind source separation (BSS) suffer from permutation and scaling indeterminacies in sub-bands. Solving these indeterminacies are critical to such BSS systems. In this paper, we propose to use sparse filter models to tackle the permutation problem. It will be shown that the ℓ1-norm of the filter matrix increases with permutations and with this motivation, an algorithm is then presented which aims to solve the permutations in the absence of any scaling. Experimental evidence to show the behaviour of ℓ1-norm of the filter matrix to sub-band permutations is presented. Then, the performance of our proposed algorithm is presented, both in noiseless and noisy cases.

Minimization of a sparsity promoting criterion for the recovery of complex-valued signalsby Lotfi Chaâri, Jean-Christophe Pesquet, Amel Benazza-Benyahia, Philippe Ciuciu. The abstract reads:

Ill-conditioned inverse problems are often encountered in signal/image processing. In this respect, convex objective functions including a sparsity promoting penalty term can be used. However, most of the existing optimization algorithms were developed for real-valued signals. In this paper, we are interested in complex-valued data. More precisely, we consider a class of penalty functions for which the associated regularized minimization problem can be solved numerically by a forward-backward algorithm. Functions within this class can be used to promote the sparsity of the solution. An application to parallel Magnetic Resonance Imaging (pMRI) reconstruction where complex-valued images are reconstructed is considered.

A Compressed Sensing Approach for Biological Microscopy Image Denoisingby Marcio M. Marim, Elsa D. Angelini , Jean-Christophe Olivo-Marin. The abstract reads:

Compressed Sensing (CS) provides a new framework for signal sampling, exploiting redundancy and sparsity in incoherent bases. For images with homogeneous objects and background, CS provides an optimal reconstruction framework from a set of random projections in the Fourier domain, while constraining bounded variations in the spatial domain. In this paper, we propose a CS-based method to simultaneously acquire and denoise data based on statistical properties of the CS optimality, signal modeling and characteristics of noise reconstruction. Our approach has several advantages over traditional denoising methods, since it can under-sample, recover and denoise images simultaneously. We demonstrate with simulated and practical experiments on fluorescence images that we obtain images with similar or increased SNR even with reduced exposure times. Such results open the gate to new mathematical imaging protocols, offering the opportunity to reduce exposure time along with photo-toxicity and photo-bleaching and assist biological applications relying on fluorescence microscopy.

Phase Transitions for Restricted Isometry Properties by Jeffrey D. Blanchard , Coralia Cartis , Jared Tanner. The abstract reads:

Currently there is no framework for the transparent comparison of sparse approximation recoverability results derived using different methods of analysis. We cast some of the most recent recoverability results for `1-regularization in terms of the phase transition framework advocated by Donoho. To allow for quantitative comparisons across different methods of analysis a particular random matrix ensemble must be selected; here we focus on Gaussian random matrices. Methods of analysis considered include the Restricted Isometry Property of Cand`es and Tao, geometric covering arguments of Rudelson and Vershynin, and convex polytopes formulations of Donoho.

Sparsity Measure and the Detection of Significant Data by Abdourrahmane Atto, Dominique Pastor, Grégoire Mercier. The abstract reads:

The paper provides a formal description of the sparsity of a representation via the detection thresholds. The formalism proposed derives from theoretical results about the detection of significant coefficients when data are observed in presence of additive white Gaussian noise. The detection thresholds depend on two parameters describing the sparsity degree for the representation of a signal. The standard universal and minimax thresholds correspond to detection thresholds associated with different sparsity degrees.

Sparsity hypotheses for robust estimation of the noise standard deviation in various signal processing applications by Dominique Pastor, Francois-Xavier Socheleau, Abdeldjalil Aïssa-El-Bey. The abstract reads:

This paper concerns the problem of estimating the noise standard deviation in different signal processing applications. The presented estimator derives from recent results in robust statistics based on sparsity hypotheses. More specifically, these theoretical results make the link between a standard problem in robust statistics (the estimation of the noise standard deviation in presence of outliers) and sparsity hypotheses. The estimator derived from these theoretical results can be applied to different signal processing applications where estimation of the noise standard deviation is crucial. In the present paper, we address speech denoising and Orthogonal Frequency Division Multiple Access (OFDMA). A relevant application should also be Communication Electronic Support (CES). For such applications, the algorithm proposed is a relevant alternative to the median absolute deviation (MAD) estimator.

Sparse Super-Resolution with Space Matching Pursuits by Guoshen Yu , Stéphane Mallat. The abstract reads:

Super-resolution image zooming is possible when the image has some geometric regularity. Directional interpolation algorithms are used in industry, with ad-hoc regularity measurements. Sparse signal decompositions in dictionaries of curvelets or bandlets find indirectly the directions of regularity by optimizing the sparsity. However, super-resolution interpolations in such dictionaries do not outperform cubic spline interpolations. It is necessary to further constraint the sparse representation, which is done through projections over structured vector spaces. A space matching pursuit algorithm is introduced to compute image decompositions over spaces of bandlets, from which a super-resolution image zooming is derived. Numerical experiments illustrate the efficiency of this super-resolution procedure compared to cubic spline interpolations.

How to use the iterative hard thresholding algorithm by Thomas Blumensath, Michael E Davies. The abstract reads:

Several computationally efficient algorithms have been shown to offer near optimal recovery of sparse signals from a small number of linear measurements. However, whilst many of the methods have similar guarantees whenever the measurements satisfy the so called restricted isometry property, empirical performance of the methods can vary significantly in a regime in which this condition is not satisfied. We here modify the Iterative Hard Thresholding algorithm by including an automatic step-size calculation. This makes the method independent from an arbitrary scaling of the measurement system and leads to a method that shows state of the art empirical performance. What is more, theoretical guarantees derived for the unmodified algorithm carry over to the new method with only minor changes.

Freely Available, Optimally Tuned Iterative Thresholding Algorithms for Compressed Sensing by Arian Maleki , David L. Donoho. The abstract reads:

We conducted an extensive computational experiment, lasting multiple CPU-years, to optimally select parameters for important classes of algorithms for finding sparse solutions of underdetermined systems of linear equations. We make the optimally tuned implementations freely available at sparselab.stanford.edu; they can be used 'out of the box' with no user input: it is not necessary to select thresholds or know the likely degree of sparsity. Our class of algorithms includes iterative hard and soft thresholding with or without relaxation, as well as CoSaMP, Subspace Pursuit and some natural extensions. As a result, our optimally tuned algorithms dominate such proposals. Our notion of optimality is defined in terms of phase transitions, i.e. we maximize the number of nonzeros at which the algorithm can successfully operate. We show that the phase transition is a well-defined quantity with our suite of random underdetermined linear systems. Our tuning gives the highest transition possible within each given class of algorithms. We verify by extensive computation the robustness of our recommendations to the amplitude distribution of the nonzero coefficients as well as the matrix ensemble defining the underdetermined system. Several specific findings are established. (a) For all algorithms the worst amplitude distribution for nonzeros is generally the constantamplitude random-sign distribution; where all nonzeros are the same size. (b) Various random matrix ensembles give the same phase transitions; random partial isometries give different transitions and require different tuning; (c) Optimally tuned Subspace Pursuit dominates optimally tuned CoSaMP, particularly so when the system is almost square. (d) For randomly decimated partial Fourier transform sampling, our recommended Iterative Soft Thresholding gives extremely good performance, making more complex algorithms like CoSaMP and Subspace Pursuit relatively pointless.

Exploiting the Sparsity of the Sinusoidal Model Using Compressed Sensing for Audio Codingby Anthony Griffin 1, Christos Tzagkarakis 1, Toni Hirvonen 1, Athanasios Mouchtaris 1, Panagiotis Tsakalides. The abstract reads:

Audio signals are represented via the sinusoidal model as a summation of a small number of sinusoids. This approach introduces sparsity to the audio signals in the frequency domain, which is exploited in this paper by applying Compressed Sensing (CS) to this sparse representation. CS allows sampling of signals at a much lower rate than the Nyquist rate if they are sparse in some basis. In this manner, a novel sinusoidal audio coding approach is proposed, which differs in philosophy from current state-of-the-art methods which encode the sinusoidal parameters (amplitude, frequency, phase) directly. It is shown here that encouraging results can be obtained by this approach, although inferior at this point compared to state-of-the-art. Several practical implementation issues are discussed, such as quantization of the CS samples, frequency resolution vs. coding gain, error checking, etc., and directions for future research in this framework are proposed.

Sparse representations versus the matched filter by F. Martinelli, J.L. Sanz. The abstract reads:

We have considered the problem of detection and estimation of compact sources immersed in a background plus instrumental noise. Sparse approximation to signals deals with the problem of finding a representation of a signal as a linear combination of a small number of elements from a set of signals called dictionary. The estimation of the signal leads to a minimization problem for the amplitude associated to the sources. We have developed a methodology that minimizes the lp-norm with a constraint on the goodness-of-fit and we have compared different norms against the matched filter.

Image Restoration with Compound Regularization Using a Bregman Iterative Algorithmby Manya V. Afonso, José M. Bioucas-Dias, Mario A. T. Figueiredo. The abstract reads:

Some imaging inverse problems may require the solution to simultaneously exhibit properties that are not enforceable by a single regularizer. One way to attain this goal is to use a linear combinations of regularizers, thus encouraging the solution to simultaneously exhibit the characteristics enforced by each individual regularizer. In this paper, we address the optimization problem resulting from this type of compound regularization using the split Bregman iterative method. The resulting algorithm only requires the ability to efficiently compute the denoising operator associated to each in- volved regularizer. Convergence is guaranteed by the theory behind the Bregman iterative approach to solving constrained optimization problems. In experiments with images that are simultaneously sparse and piece-wise smooth, the proposed algorithm successfully solves the deconvolution problem with a compound regularizer that is the linear combination of the `1 and total variation (TV) regularizers. The lowest MSE obtained with the (`1+TV) regularizer is lower than that obtained with TV or `1 alone, for any value of the corresponding regularization parameters.

Sparse Coding and Automatic Relevance Determination for Multi-way models by Morten Mørup, Lars Kai Hansen. The abstract reads:

Multi-way modeling has become an important tool in the analysis of large scale multi-modal data. An important class of multi-way models is given by the Tucker model which decomposes the data into components pertaining to each modality as well as a core array indicating how the components of the various modalities interact. Unfortunately, the Tucker model is not unique. Furthermore, establishing the adequate model order is difficult as the number of components are specified for each mode separately. Previously, rotation criteria such as VARIMAX has been used to resolve the non-uniqueness of the Tucker representation [7]. Furthermore, all potential models have been exhaustively evaluated to estimate the adequate number of components of each mode. We demonstrate how sparse coding can prune excess components and resolve the non-uniqueness of the Tucker model while Automatic Relevance Determination in Bayesian learning form a framework to learn the adequate degree of sparsity imposed. On a wide range of multi-way data sets the proposed method is demonstrated to successfully prune excess components thereby establishing the model order. Furthermore, the non-uniqueness of the Tucker model is resolved since among potential models the models giving the sparsest representation as measured by the sparse coding regularization is attained. The approach readily generalizes to regular sparse coding as well as the CandeComp/PARAFAC model as both models are special cases of the Tucker model.

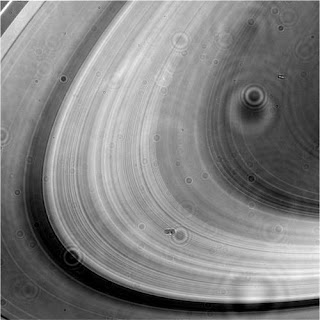

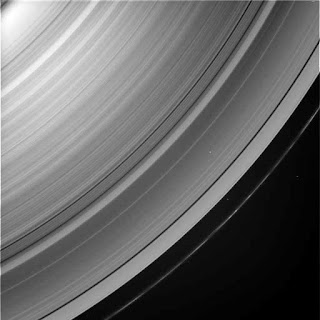

Credit Photo: NASA. View of the Earth from the International Space Station.