Today we have a long, and still incomplete, list of new papers to read over the weekend. Enjoy!

Magnetic Resonance Imaging (MRI) can elucidate the interior structure of an optically opaque object in unparalleled detail but is ultimately limited by the need to enclose the object within a detection coil; acquiring the image with increasingly smaller pixels reduces the sensitivity because each pixel occupies a proportionately smaller fraction of the detector’s volume. Here, we overcome this limitation using remotely detected MRI: images of fluids flowing in channel assemblies are encoded into the phase and intensity of the constituent molecules’ nuclear magnetic resonance signals and then decoded by a volume-matched detector after the fluids flow out of the sample. We thus accelerate zoomed-in MRI acquisition in microfluidic devices by a factor of $10^6$, obtaining microscopic images of flow and velocity distributions. Our results illustrate the facile integration of MRI with microfluidic assays and suggest generalizations to other systems involving microscopic flow.

Robust PCA via Outlier Pursuit by Huan Xu, Constantine Caramanis, Sujay Sanghavi. The abstract reads:

Singular Value Decomposition (and Principal Component Analysis) is one of the most widely used techniques for dimensionality reduction: successful and efficiently computable, it is nevertheless plagued by a well-known, well-documented sensitivity to outliers. Recent work has considered the setting where each point has a few arbitrarily corrupted components. Yet, in applications of SVD or PCA such as robust collaborative filtering or bioinformatics, malicious agents, defective genes, or simply corrupted or contaminated experiments may effectively yield entire points that are completely corrupted.

We present an efficient convex optimization-based algorithm we call Outlier Pursuit, that under some mild assumptions on the uncorrupted points (satisfied, e.g., by the standard generative assumption in PCA problems) recovers the exact optimal low-dimensional subspace, and identifies the corrupted points. Such identification of corrupted points that do not conform to the low-dimensional approximation, is of paramount interest in bioinformatics and financial applications, and beyond. Our techniques involve matrix decomposition using nuclear norm minimization, however, our results, setup, and approach, necessarily differ considerably from the existing line of work in matrix completion and matrix decomposition, since we develop an approach to recover the correct column space of the uncorrupted matrix, rather than the exact matrix itself.
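For readers who want to see the kind of convex program this abstract is talking about: as far as I recall from the paper (the abstract itself does not spell it out, so treat the notation below as my assumption), Outlier Pursuit splits the data matrix $M$ into a low-rank part $L$ and a column-sparse part $C$:

```latex
\min_{L,\,C} \; \|L\|_* + \lambda \, \|C\|_{1,2}
\quad \text{subject to} \quad M = L + C,
\qquad \text{where} \quad \|C\|_{1,2} = \sum_{j} \|C_{:,j}\|_2 .
```

Here $\|L\|_*$ is the nuclear norm (the sum of the singular values of $L$) and $\|C\|_{1,2}$ sums the $\ell_2$ norms of the columns of $C$. The nonzero columns of $C$ flag the corrupted points, and the column space of $L$ is the subspace one hopes to recover, which matches the abstract's point that the target is the column space rather than the exact matrix.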

A commenter in the previous entry thought I had missed this one, but fear not, I was running an experiment in the past two days. Stay tuned for the results. Here is the paper:

Sparse and silent coding in neural circuits by András Lőrincz, Zsolt Palotai, Gábor Szirtes. The abstract reads:

Sparse coding algorithms are about finding a linear basis in which signals can be represented by a small number of active (non-zero) coefficients. Such coding has many applications in science and engineering and is believed to play an important role in neural information processing. However, due to the computational complexity of the task, only approximate solutions provide the required efficiency (in terms of time). As new results show, under particular conditions there exist efficient solutions by minimizing the magnitude of the coefficients ('$l_1$-norm') instead of minimizing the size of the active subset of features ('$l_0$-norm'). Straightforward neural implementation of these solutions is not likely, as they require a priori knowledge of the number of active features. Furthermore, these methods utilize iterative re-evaluation of the reconstruction error, which in turn implies that final sparse forms (featuring 'population sparseness') can only be reached through the formation of a series of non-sparse representations, which is in contrast with the overall sparse functioning of the neural systems ('lifetime sparseness'). In this article we present a novel algorithm which integrates our previous '$l_0$-norm' model on spike based probabilistic optimization for sparse coding with ideas coming from novel '$l_1$-norm' solutions.

The resulting algorithm allows neurally plausible implementation and does not require an exactly defined sparseness level thus it is suitable for representing natural stimuli with a varying number of features. We also demonstrate that the combined method significantly extends the domain where optimal solutions can be found by '$l_1$-norm' based algorithms.

At some point, we will figure out that some neural diseases arise because some neural structures are just not competent enough at solving problems the l_1 way and are stuck with an l_0 approach... The day a result like this is published, I'll buy lunch for the authors :-)

Following on a similar thread here is:

Fast Inference in Sparse Coding Algorithms with Applications to Object Recognition by Koray Kavukcuoglu, Marc'Aurelio Ranzato, Yann LeCun. The abstract reads:

Adaptive sparse coding methods learn a possibly overcomplete set of basis functions, such that natural image patches can be reconstructed by linearly combining a small subset of these bases. The applicability of these methods to visual object recognition tasks has been limited because of the prohibitive cost of the optimization algorithms required to compute the sparse representation. In this work we propose a simple and efficient algorithm to learn basis functions. After training, this model also provides a fast and smooth approximator to the optimal representation, achieving even better accuracy than exact sparse coding algorithms on visual object recognition tasks.

Efficient Matrix Completion with Gaussian Models by Flavien Léger, Guoshen Yu, Guillermo Sapiro. The abstract reads:

A general framework based on Gaussian models and a MAP-EM algorithm is introduced in this paper for solving matrix/table completion problems. The numerical experiments with the standard and challenging movie ratings data show that the proposed approach, based on probably one of the simplest probabilistic models, leads to the results in the same ballpark as the state-of-the-art, at a lower computational cost.

Adaptive Compressed Image Sensing Using Dictionaries by Amir Averbuch, Shai Dekel and Shay Deutsch. The abstract reads:

In recent years, the theory of Compressed Sensing has emerged as an alternative for the Shannon sampling theorem, suggesting that compressible signals can be reconstructed from far fewer samples than required by the Shannon sampling theorem. In fact the theory advocates that non-adaptive, ‘random’ functionals are in some sense optimal for this task. However, in practice Compressed Sensing is very difficult to implement for large data sets, since the algorithms are exceptionally slow and have high memory consumption. In this work, we present a new alternative method for simultaneous image acquisition and compression called Adaptive Compressed Sampling. Our approach departs fundamentally from the (non adaptive) Compressed Sensing mathematical framework by replacing the ‘universal’ acquisition of incoherent measurements with a direct and fast method for adaptive transform coefficient acquisition. The main advantages of this direct approach are that no complex recovery algorithm is in fact needed and that it allows more control over the compressed image quality, in particular, the sharpness of edges. Our experimental results show that our adaptive algorithms perform better than existing non-adaptive methods in terms of image quality and speed.

Random Access Compressed Sensing in Underwater Sensor Networks by Fatemeh Fazel, Maryam Fazel, Milica Stojanovic. The abstract reads:

In this paper, we propose a power-efficient underwater sensor network scheme employing compressed sensing and random channel access. The proposed scheme is suitable for applications where a large number of sensor nodes are deployed uniformly over a certain area to measure a physical phenomenon. The underlying assumption is that most physical phenomena have sparse representations in the frequency domain. The network is assumed to have a Fusion Center (FC) that collects the observations of sensor nodes and reconstructs the measured field based on the obtained measurements. The proposed method is completely decentralized, i.e., sensor nodes act independently without the need for coordination with each other or with the FC. During each frame, a Bernoulli random generator at each node determines whether the node participates in sampling or stays inactive during that sampling period. If selected, it measures the physical quantity of interest, e.g. temperature. A second random generator with a uniform distribution then picks a (random) delay for the node to send its data to the FC. The proposed network scheme, referred to as Random Access Compressed Sensing (RACS), results in a simple power-efficient design, for: a) it eliminates the need for duplexing, which requires coordination from the FC; b) there is no need for acknowledgment packets and retransmissions in case packets collide; and moreover, c) it is efficient in terms of the communication resources used (only a small fraction of nodes sample and transmit in each sampling period).
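The per-frame rule described in this abstract (a Bernoulli draw to decide who samples, then a uniform random delay before transmitting) is simple enough to simulate. Here is a minimal sketch of it. The function names, the parameter values and the collision rule (two equal-length packets collide when their start times are closer than one packet duration, with propagation delays ignored) are my own assumptions, not something taken from the paper.

```python
import random


def racs_frame(num_nodes, p_sample, frame_length, rng):
    """Simulate one frame: return (node_id, delay) for every active node."""
    transmissions = []
    for node in range(num_nodes):
        # Bernoulli draw: does this node take part in the current frame?
        if rng.random() < p_sample:
            # Uniform random delay before sending the sample to the fusion center.
            delay = rng.uniform(0.0, frame_length)
            transmissions.append((node, delay))
    return transmissions


def count_collisions(transmissions, packet_duration):
    """Count packets that overlap in time with at least one other packet.

    Assumed collision model: equal-length packets, no propagation delay.
    With equal lengths it is enough to compare neighbours in time order.
    """
    ordered = sorted(transmissions, key=lambda item: item[1])
    collided = set()
    for (node_a, time_a), (node_b, time_b) in zip(ordered, ordered[1:]):
        if time_b - time_a < packet_duration:
            collided.update((node_a, node_b))
    return len(collided)


rng = random.Random(0)
tx = racs_frame(num_nodes=1000, p_sample=0.05, frame_length=100.0, rng=rng)
lost = count_collisions(tx, packet_duration=0.2)
print(len(tx), "nodes transmitted,", lost, "packets collided")
```

No node ever talks to another node or waits for the fusion center, which is the decentralization the abstract emphasizes. Collided packets are simply dropped in this toy model, and the fusion center would reconstruct the field from whatever arrives.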

Static feature-specific imaging (SFSI), where the measurement basis remains fixed/static during the data measurement process, has been shown to be superior to conventional imaging for reconstruction tasks. Here, we describe an adaptive approach that utilizes past measurements to inform the choice of measurement basis for future measurements in an FSI system, with the goal of maximizing the reconstruction fidelity while employing the fewest measurements. An algorithm to implement this adaptive approach is developed for FSI systems, and the resulting systems are referred to as adaptive FSI (AFSI) systems. A simulation study is used to analyze the performance of the AFSI system for two choices of measurement basis: principal component (PC) and Hadamard. Here, the root mean squared error (RMSE) metric is employed to quantify the reconstruction fidelity. We observe that an AFSI system achieves as much as 30% lower RMSE compared to an SFSI system. The performance improvement of the AFSI systems is verified using an experimental setup employed using a digital micromirror device (DMD) array.

If you recall, Mark Neifeld made a presentation on the same subject at the Duke-AFRL Meeting a year and a half ago, entitled Adaptation for Task-Specific Compressive Imaging, that featured this slide:

The attendant video of this presentation is here.

In sensor networks, energy efficient data manipulation/transmission is very important for data gathering, due to significant power constraints on the sensors. As a potential solution, Compressed Sensing (CS) has been proposed, because it requires capturing a smaller number of samples for successful reconstruction of sparse data. Traditional CS does not take explicitly into consideration the cost of each measurement (it simply tries to minimize the number of measurements), and this ignores the need to transport measurements over the sensor network. In this paper, we study CS approaches for sensor networks that are spatially-localized, thus reducing the cost of data gathering. In particular, we study the reconstruction accuracy properties of a new distributed measurement system that constructs measurements within spatially-localized clusters. We first introduce the concept of maximum energy overlap between clusters and basis functions, and show that this metric can be used to estimate the minimum number of measurements required for accurate reconstruction. Based on this metric, we propose a centralized iterative algorithm for joint optimization of the energy overlap and distance between sensors in each cluster. Our simulation results show that we can achieve significant savings in transport cost with small reconstruction error.

Compressed sensing allows perfect recovery of sparse signals (or signals sparse in some basis) using only a small number of random measurements. Existing results in compressed sensing literature have focused on characterizing the achievable performance by bounding the number of samples required for a given level of signal sparsity. However, using these bounds to minimize the number of samples requires a-priori knowledge of the sparsity of the unknown signal, or the decay structure for near-sparse signals. Furthermore, there are some popular recovery methods for which no such bounds are known. In this paper, we investigate an alternative scenario where observations are available in sequence. For any recovery method, this means that there is now a sequence of candidate reconstructions. We propose a method to estimate the reconstruction error directly from the samples themselves, for every candidate in this sequence. This estimate is universal in the sense that it is based only on the measurement ensemble, and not on the recovery method or any assumed level of sparsity of the unknown signal. With these estimates, one can now stop observations as soon as there is reasonable certainty of either exact or sufficiently accurate reconstruction. They also provide a way to obtain “run-time” guarantees for recovery methods that otherwise lack a-priori performance bounds. We investigate both continuous (e.g. Gaussian) and discrete (e.g. Bernoulli or Fourier) random measurement ensembles, both for exactly sparse and general near-sparse signals, and with both noisy and noiseless measurements.
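To make the idea of estimating the error "from the samples themselves" a little more concrete, here is a minimal sketch under assumptions of my own: Gaussian measurement rows with unit-variance entries, noiseless samples, and a candidate reconstruction that was built without the fresh samples. In that setting each new residual has variance equal to the squared reconstruction error, so averaging squared residuals gives an estimate that does not care how the candidate was obtained. The names `estimate_error`, `signal` and `candidate` are hypothetical, and this is not claimed to be the exact estimator used in the paper.

```python
import math
import random


def estimate_error(candidate, new_rows, new_samples):
    """Estimate ||x - candidate||_2 from fresh measurements y_i = <a_i, x>.

    Assumes each row a_i has i.i.d. N(0, 1) entries, so the residual
    y_i - <a_i, candidate> has variance ||x - candidate||_2^2.
    """
    residuals = [
        y - sum(a * c for a, c in zip(row, candidate))
        for row, y in zip(new_rows, new_samples)
    ]
    return math.sqrt(sum(r * r for r in residuals) / len(residuals))


rng = random.Random(0)
n, extra = 200, 400

# Hypothetical sparse signal and an imperfect candidate reconstruction.
signal = [0.0] * n
for index in rng.sample(range(n), 10):
    signal[index] = rng.gauss(0.0, 1.0)
candidate = [v + 0.05 * rng.gauss(0.0, 1.0) for v in signal]

# Fresh measurements that were not used to build the candidate.
rows = [[rng.gauss(0.0, 1.0) for _ in range(n)] for _ in range(extra)]
samples = [sum(a * v for a, v in zip(row, signal)) for row in rows]

estimated = estimate_error(candidate, rows, samples)
true_error = math.sqrt(sum((s - c) ** 2 for s, c in zip(signal, candidate)))
print(f"estimated error {estimated:.3f}, true error {true_error:.3f}")
```

A stopping rule in the spirit of the abstract would keep acquiring samples until this estimate falls below a chosen tolerance.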

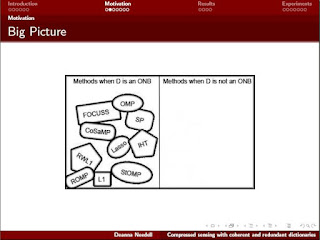

Google also found the following two presentations:

Finally, Michael Friedlander, Felix Herrmann and Ozgur Yilmaz have organized the following session at the upcoming 2010 CMS meeting in Vancouver: Compressed Sensing: Theory, Algorithms and Application. The abstracts are listed below:

- Lorne Applebaum - Multiuser Detection in Asynchronous On-Off Random Access Channels Using Lasso : We consider on-off random access channels where users transmit either a one or a zero to a base station. Such channels represent an abstraction of control channels used for scheduling requests in third-generation cellular systems and uplinks in wireless sensor networks deployed for target detection. A key characteristic of these systems is their asynchronous nature. We will introduce a Lasso-based scheme for multiuser detection in asynchronous on-off random access channels that does not require knowledge of the delays or the instantaneous received signal-to-noise ratios of the individual users at the base station. For any fixed maximum delay in the system, the proposed scheme allows an exponential number of total users with respect to code length, achieving almost the same problem dimension scaling relationships as that required in the ideal case of fully synchronous channels. Further, the computational complexity of the proposed scheme differs from that of a similar oracle-based scheme with perfect knowledge of the user delays by at most a log factor. The results presented here are non-asymptotic, in contrast to previous work for synchronous channels that only guarantees that the probability of error at the base station goes to zero asymptotically with the number of users. Finally, we give a deterministic code construction suitable for the delay agnostic system. The code construction is based on a cyclic code in which equivalence classes are assigned to users. The code's low coherence permits recovery guarantees with Lasso.

- Stephen Becker - First-order methods for constrained linear inverse problems : Many algorithms have recently been proposed to solve the unconstrained forms of linear inverse problems, but few algorithms solve the constrained form. We show a general framework for solving constrained problems that applies to all problems of interest to compressed sensing. The technique is based on smoothing and solving the dual formulation. Using this method, it is possible to solve problems such as the Dantzig Selector, or composite problems such as minimizing a combination of the TV norm and weighted ℓ1 norm. Additionally, we discuss recent results about exact regularization and about accelerated continuation. http://arxiv.org/abs/1009.2065

- Jim Burke - Sparsity and Nonconvex Nonsmooth Optimization : Sparsity (or parsimonious) optimization is a framework for examining the trade-off between optimality and the number of independent variables to optimize over. Much of this framework was first developed for application to a range of problems in statistics where the goal is to explain as much of the data as possible with the fewest number of explanatory variables. Within the past few years, the general methodology of sparsity optimization has found its way into a wide range of applications. In this talk we consider a general sparsity optimization framework for arbitrary extended real-valued objective functions that are continuous on their essential domains. General approximation properties for the associated sparsity optimization problem are given, and a fixed point iteration for the subdifferential inclusion identifying approximate stationarity is developed. Convergence results and numerical experiments are presented.

- Mike Davies - Rank Aware Algorithms for Joint Sparse Recovery : This talk will revisit the sparse multiple measurement vector (MMV) problem, where the aim is to recover a set of jointly sparse multichannel vectors from incomplete measurements. This problem has received increasing interest as an extension of single channel sparse recovery, which lies at the heart of the emerging field of compressed sensing. However, MMV approximation also has links with work on direction of arrival estimation in the field of array signal processing. Inspired by these links, we consider a new family of MMV algorithms based on the well-known MUSIC method in array processing, highlighting the role of the rank of the unknown signal matrix in determining the difficulty of the recovery problem. We will show that the recovery conditions for our new algorithms take into account the observed rank of the signal matrix. This is in contrast to previously proposed techniques such as Simultaneous Orthogonal Matching Pursuit (SOMP) and mixed norm minimization techniques which we show to be effectively blind to the rank information. Numerical simulations demonstrate that our new rank aware techniques are significantly better than existing methods in dealing with multiple measurements.

- Laurent Demanet - Fitting matrices from applications to random vectors : What can be determined about the inverse $A^{-1}$ of a matrix $A$ from one application of $A$ to a vector of random entries? If the $n$-by-$n$ inverse $A^{-1}$ belongs to a specified linear subspace of dimension $p$, then come to the talk to hear which assumptions on this subspace, $p$, and $n$, guarantee an accurate recovery of $A^{-1}$ with high probability. This randomized fitting method provides a compelling preconditioner for the wave-equation Hessian (normal operator) in seismic imaging. Joint work with Pierre-David Letourneau (Stanford) and Jiawei Chiu (MIT).

- Yonina Eldar - Xampling: Analog-to-digital at Sub-Nyquist rates : Signal processing methods have changed substantially over the last several decades. The number of operations that are shifted from analog to digital is constantly increasing. While technology advances enable mass processing of huge data streams, the acquisition capabilities do not scale sufficiently fast so that the conversion to digital has become a serious bottleneck. For some applications, the maximal frequency of the input signals, which dictates the Nyquist rate, already exceeds the possible rates achievable with existing devices. In this talk, we present a new framework for sampling wideband analog signals at rates far below that dictated by the Nyquist rate. We refer to this methodology as Xampling: A combination of compression and sampling, performed simultaneously. Xampling merges results from standard sampling theory with recent developments in the field of compressed sensing in order to directly sample a wide class of analog signals at very low rates using existing hardware devices.

We begin by introducing the Xampling methodology. We then consider some specific examples including low rate sampling of multiband signals with applications to cognitive radio, recovery of time delays from low rate samples with applications to ultrasound, and high resolution radar.

- Gitta Kutyniok - Geometric Separation by Single-Pass Alternating Thresholding : Modern data is customarily of multimodal nature, and analysis tasks typically require separation into the single components such as, for instance, in neurobiological imaging a separation into spines (pointlike structures) and dendrites (curvilinear structures). Although a highly ill-posed problem, inspiring empirical results show that the morphological difference of these components sometimes allows a very precise separation. In this talk we will present a theoretical study of the separation of a distributional model situation of point- and curvilinear singularities exploiting a surprisingly simple single-pass alternating thresholding method applied to wavelets and shearlets as two complementary frames. Utilizing the fact that the coefficients are clustered geometrically in the chosen frames, we prove that at sufficiently fine scales arbitrarily precise separation is possible. Surprisingly, it turns out that the thresholding index sets even converge to the wavefront sets of the point- and curvilinear singularities in phase space and that those wavefront sets are perfectly separated by the thresholding procedure. Main ingredients of our analysis are the novel notion of cluster coherence and a microlocal analysis viewpoint.

This is joint work with David Donoho (Stanford U.).

- Zhaosong Lu - Penalty Decomposition Methods for Rank and l0-Norm Minimization : In the first part of this talk, we consider general rank minimization problems. We first show that a class of matrix optimization problems can be solved as lower dimensional vector optimization problems. As a consequence, we establish that a class of rank minimization problems have closed form solutions. Using this result, we then propose penalty decomposition (PD) methods for general rank minimization problems in which each subproblem is solved by a block coordinate descent method. Under some suitable assumptions, we show that any accumulation point of the sequence generated by our method when applied to the rank constrained minimization problem is a stationary point of a nonlinear reformulation of the problem. In the second part, we consider general l0-norm minimization problems. We first reformulate the l0-norm constrained problem as an equivalent rank minimization problem and then apply the above PD method to solve the latter problem. Further, by utilizing the special structures, we obtain a PD method that only involves vector operations. Under some suitable assumptions, we establish that any accumulation point of the sequence generated by the PD method satisfies a first-order optimality condition that is generally stronger than one natural optimality condition. Finally, we test the performance of our PD methods on matrix completion, nearest low-rank correlation matrix, sparse logistic regression and sparse inverse covariance selection problems. The computational results demonstrate that our methods generally outperform the existing methods in terms of solution quality and/or speed.

- Hassan Mansour - Recovery of Compressively Sampled Signals Using Partial Support Information : In this talk, we discuss the recovery conditions of weighted ℓ1 minimization for signal reconstruction from compressed sensing measurements when partial support information is available. We show that if at least 50% of the (partial) support information is accurate, then weighted ℓ1 minimization is stable and robust under weaker conditions than the analogous conditions for standard ℓ1 minimization. Moreover, weighted ℓ1 minimization provides better bounds on the reconstruction error in terms of the measurement noise and the compressibility of the signal to be recovered. We illustrate our results with extensive numerical experiments on synthetic as well as audio and video signals.

- Ali Pezeshki - Sense and Sensitivity: Model Mismatch in Compressed Sensing : We study the sensitivity of compressed sensing to mismatch between the assumed and the actual models for sparsity. We start by analyzing the effect of model mismatch on the best k-term approximation error, which is central to providing exact sparse recovery guarantees. We establish achievable bounds for the ℓ1 error of the best k-term approximation and show that these bounds grow linearly with the signal dimension and the mismatch level between the assumed and actual models for sparsity. We then derive bounds, with similar growth behavior, for the basis pursuit ℓ1 recovery error, indicating that the sparse recovery may suffer large errors in the presence of model mismatch. Although we present our results in the context of basis pursuit, our analysis applies to any sparse recovery principle that relies on the accuracy of best k-term approximations for its performance guarantees. We particularly highlight the problematic nature of model mismatch in Fourier imaging, where spillage from off-grid DFT components turns a sparse representation into an incompressible one. We substantiate our mathematical analysis by numerical examples that demonstrate a considerable performance degradation for image inversion from compressed sensing measurements in the presence of model mismatch. This is joint work with Yuejie Chi, Louis Scharf, and Robert Calderbank.

- Holger Rauhut - Recovery of functions in high dimensions via compressive sensing : Compressive sensing predicts that sparse vectors can be recovered efficiently from highly undersampled measurements. It is known in particular that multivariate sparse trigonometric polynomials can be recovered from a small number of random samples. Classical methods for recovering functions in high spatial dimensions usually suffer the curse of dimension, that is, the number of samples scales exponentially in the dimension (the number of variables of the function). We introduce a new model of functions in high dimensions that uses "sparsity with respect to dimensions". More precisely, we assume that the function is very smooth in most of the variables, and is allowed to be rather rough in only a small but unknown set of variables. This translates into a certain sparsity model on the Fourier coefficients. Using techniques from compressive sensing, we are able to recover functions in this model class efficiently from a small number of samples. In particular, this number scales only logarithmically in the spatial dimension - in contrast to the exponential scaling in classical methods.

- Benjamin Recht - The Convex Geometry of Inverse Problems : Building on the success of generalizing compressed sensing to matrix completion, this talk discusses progress on further extending the catalog of objects and structures that can be recovered from partial information. I will focus on a suite of data analysis algorithms designed to decompose signals into sums of atomic signals from a simple but not necessarily discrete set. These algorithms are derived in a convex optimization framework that encompasses previous methods based on ℓ1-norm minimization and nuclear norm minimization for recovering sparse vectors and low-rank matrices. I will discuss general recovery guarantees and implementation schemes for this suite of algorithms and will describe several example classes of atoms and applications.

- Justin Romberg - Random coding for forward modeling : Compressed sensing has shown us that sparse signal acquisition can be made efficient by injecting randomness into the measurement process. In this talk, we will show how these same ideas can be used to dramatically reduce the computation required for two types of simulation problems in acoustics. In the first, we show how all of the channels in a multiple-input multiple-output system can be acquired jointly by simultaneously exciting all of the inputs with different random waveforms, and give an immediate application to seismic forward modeling. In the second, we consider the problem of acoustic source localization in a complicated channel. We show that the amount of computation to perform ``matched field processing'' (matched filtering) can be reduced by precomputing the response of the channel to a small number of dense configurations of random sources.

- Rayan Saab - Sobolev Duals of Random Frames and Sigma-Delta Quantization for Compressed Sensing : Compressed sensing, as a signal acquisition technique, has been shown to be highly effective for dimensionality reduction. On the other hand, quantization of compressed sensing measurements has been a relatively under-addressed topic. In particular, the results of Candes, Romberg and Tao, and of Donoho guarantee that if a uniform quantizer of step size $\delta$ is used to quantize $m$ measurements $y = \Phi x$ of a $k$-sparse signal $x \in \mathbb{R}^N$, where $\Phi$ satisfies the restricted isometry property, then the reconstruction error via $\ell_1$-minimization is $O(\delta)$. This is the simplest and most commonly assumed approach for quantization of compressed sensing measurements. On the other hand, in this talk we show that if instead of uniform quantization, an $r$th order $\Sigma\Delta$ quantization scheme with the same output alphabet is used to quantize $y$, then there is an alternative recovery method via Sobolev dual frames which guarantees a reduction of the approximation error by a factor of $(m/k)^{(r-1/2)\alpha}$ for any $0 < \alpha < 1$, if $m \gtrsim_r k (\log N)^{1/(1-\alpha)}$. The result holds with high probability on the initial draw of the measurement matrix $\Phi$ from the Gaussian distribution, and uniformly for all $k$-sparse signals $x$ that satisfy a mild size condition on their supports.

- Thomas Strohmer - How sparsity can enrich wireless communications : We demonstrate how concepts from compressive sensing and random matrix theory can combat some challenging problems of next-generation wireless communication systems.

- Ewout van den Berg - Spot - A linear operator toolbox for Matlab : Spot is a Matlab toolbox for the construction, application, and manipulation of linear operators. One of the main achievements of the package is that it provides operators in such a way that they are as easy to work with as explicit matrices, while represented implicitly. This combines the benefits of explicit matrices with the scalability of implicit representations, thus clearing the way for fast prototyping with complex and potentially large linear operators. (This is joint work with Michael Friedlander.)

- Rachel Ward - New and improved Johnson-Lindenstrauss embeddings via the Restricted Isometry Property : The Johnson-Lindenstrauss (JL) Lemma states that any set of $p$ points in high dimensional Euclidean space can be embedded into $O(\delta^{-2}\log(p))$ dimensions, without distorting the distance between any two points by more than a factor between $1-\delta$ and $1+\delta$. We establish a "near-equivalence" between the JL Lemma and the Restricted Isometry Property (RIP), a well-known concept in the theory of sparse recovery often used for showing the success of ℓ1-minimization. In particular, we show that matrices satisfying the Restricted Isometry Property of optimal order, with randomized column signs, provide optimal Johnson-Lindenstrauss embeddings up to a logarithmic factor in $N$. Our results have implications for dimensionality reduction and sparse recovery: on the one hand, we arrive at the best known bounds on the necessary JL embedding dimension for a wide class of structured random matrices; on the other hand, our results expose several new families of universal encoding strategies in compressed sensing. This is joint work with Felix Krahmer.

- Rebecca Willett - Compressed Sensing with Poisson Noise : Compressed sensing has profound implications for the design of new imaging and network systems, particularly when physical and economic limitations require that these systems be as small and inexpensive as possible. However, several aspects of compressed sensing theory are inapplicable to real-world systems in which noise is signal-dependent and unbounded. In this work we discuss some of the key theoretical challenges associated with the application of compressed sensing to practical hardware systems and develop performance bounds for compressed sensing in the presence of Poisson noise. We develop two novel sensing paradigms, based on either pseudo-random dense sensing matrices or expander graphs, which satisfy physical feasibility constraints. In these settings, as the overall intensity of the underlying signal increases, an upper bound on the reconstruction error decays at an appropriate rate (depending on the compressibility of the signal), but for a fixed signal intensity, the error bound actually grows with the number of measurements or sensors. This surprising fact is both proved theoretically and justified based on physical intuition.
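Coming back to Rachel Ward's abstract in the list above, the recipe "take a RIP matrix, randomize the column signs, get a Johnson-Lindenstrauss embedding" is easy to try numerically. The sketch below is only an illustration under my own assumptions: it uses a randomly row-subsampled Hadamard matrix as a stand-in for the RIP matrix, the sizes (20 points, 512 dimensions reduced to 256) are arbitrary, and the names `fwht` and `make_embedding` are hypothetical.

```python
import math
import random


def fwht(vec):
    """Unnormalized fast Walsh-Hadamard transform; len(vec) must be a power of 2."""
    out = list(vec)
    h = 1
    while h < len(out):
        for start in range(0, len(out), 2 * h):
            for i in range(start, start + h):
                a, b = out[i], out[i + h]
                out[i], out[i + h] = a + b, a - b
        h *= 2
    return out


def make_embedding(dim, target_dim, rng):
    """Return x -> (1/sqrt(m)) * R * H * D * x with random signs D and row subset R."""
    signs = [rng.choice((-1.0, 1.0)) for _ in range(dim)]  # randomized column signs
    rows = rng.sample(range(dim), target_dim)              # rows kept from the Hadamard matrix
    scale = 1.0 / math.sqrt(target_dim)

    def embed(x):
        flipped = [s * v for s, v in zip(signs, x)]
        transformed = fwht(flipped)
        return [scale * transformed[r] for r in rows]

    return embed


def distance(u, v):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


rng = random.Random(1)
points = [[rng.gauss(0.0, 1.0) for _ in range(512)] for _ in range(20)]
embed = make_embedding(dim=512, target_dim=256, rng=rng)
projected = [embed(p) for p in points]

# Ratio of embedded distance to original distance for every pair of points.
ratios = [
    distance(projected[i], projected[j]) / distance(points[i], points[j])
    for i in range(len(points))
    for j in range(i + 1, len(points))
]
low, high = min(ratios), max(ratios)
print(f"pairwise distance ratios lie in [{low:.3f}, {high:.3f}]")
```

The point of using a structured matrix like this one is that the embedding costs a fast transform instead of a dense matrix-vector product. The ratios staying close to 1 is what the lemma promises, with $1-\delta$ and $1+\delta$ as the lower and upper limits.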