When it came out in 1988, the Fast Multipole Method heralded a new era in science and engineering. Today's paper aims at doing the same for kernels used in any type of big data problems:

Structured Block Basis Factorization for Scalable Kernel Matrix Evaluation by Ruoxi Wang, Yingzhou Li, Michael W. Mahoney, Eric Darve

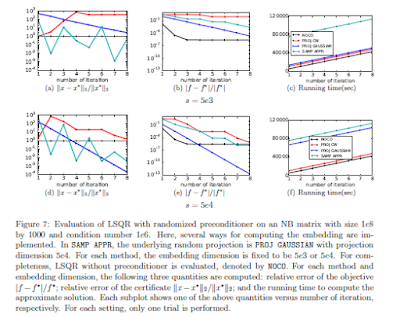

Kernel matrices are popular in machine learning and scientific computing, but they are limited by their quadratic complexity in both construction and storage. It is well-known that as one varies the kernel parameter, e.g., the width parameter in radial basis function kernels, the kernel matrix changes from a smooth low-rank kernel to a diagonally-dominant and then fully-diagonal kernel. Low-rank approximation methods have been widely-studied, mostly in the first case, to reduce the memory storage and the cost of computing matrix-vector products. Here, we use ideas from scientific computing to propose an extension of these methods to situations where the matrix is not well-approximated by a low-rank matrix. In particular, we construct an efficient block low-rank approximation method---which we call the Block Basis Factorization---and we show that it has O(n) complexity in both time and memory. Our method works for a wide range of kernel parameters, extending the domain of applicability of low-rank approximation methods, and our empirical results demonstrate the stability (small standard deviation in error) and superiority over current state-of-art kernel approximation algorithms.

No comments:

Post a Comment