Hi...

... One of our papers was published recently. It is about the sparsity-based random sampling and reconstruction of NMR spectra. NMR spectra analysis is an important tool for chemistry and data acquisition is slow for multidimensional NMR spectra. Here is the information for our work:

Xiaobo Qu, Di Guo, Xue Cao, Shuhui Cai, Zhong Chen. Reconstruction of self-sparse 2D NMR spectra from undersampled data in indirect dimension, Sensors, 11(9):8888-8909,2011.

Abstract: Reducing the acquisition time for two-dimensional nuclear magnetic resonance (2D NMR) spectra is important. One way to achieve this goal is reducing the acquired data. In this paper, under the framework of compressed sensing, we proposed to undersample the data in the indirect dimension for a type of self-sparse 2D NMR spectra, that is, only a few meaningful spectral peaks occupy partial locations, while the rest locations own very small or even no peaks. The spectrum is reconstructed by enforcing its sparsity in an identity matrix domain with Lp (p = 0.5) norm optimization algorithm. Both theoretical analysis and simulation results show that the proposed method can reduce the reconstruction error compared with the wavelet-based L1 norm optimization.Keywords: NMR; spectral reconstruction; sparsity; undersampling; compressed sensing

Project webpage: http://quxiaobo.8866.org/project/self-sparse%20NMR/ index.html

Highly related journal papers on application of sparsity-based reconstruction of NMR spectra:

- Drori, I. Fast l1 minimization by iterative thresholding for multidimensional NMR Spectroscopy. Eurasip J. Adv. Sig. Pr. 2007, Article ID 20248.

- Stern, A.S.; Donoho, D.L.; Hoch, J.C. NMR data processing using iterative thresholding and minimum l1-norm reconstruction. J. Magn. Reson. 2007, 188, 295-300.

- Kazimierczuk, K.; Orekhov, V.Y. Accelerated NMR spectroscopy by using compressed sensing. Angewandte Chemie International Edition 2011, 50, 5556-5559.

- Holland, D.J.; Bostock, M.J.; Gladden, L.F.; Nietlispach, D. Fast multidimensional NMR spectroscopy using compressed sensing.Angewandte Chemie International Edition 2011, 50, 6548-6551.

- Shrot, Y.; Frydman, L. Compressed sensing and the reconstruction of ultrafast 2D NMR data: Principles and biomolecular applications.J. Magn. Reson. 2011, 209, 352-358.

Thanks Xiaobo !

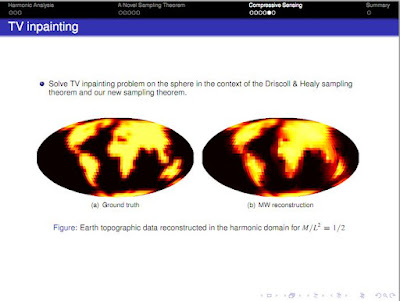

Also found on the interwebs two presentations by Jason McEwen

- Radio interferometric imaging with compressed sensing

- A novel sampling theorem on the sphere with implications for compressive sensing

Ok, let's call it the Donoho-Tanner phase transition once and for all, shall we ?

On Phase Transition of Compressed Sensing in the Complex Domain by Zai Yang, Cishen Zhang, Lihua Xie. The abstract reads:

The phase transition is a performance measure of the sparsity-undersampling tradeoff in compressed sensing (CS). This letter reports, for the first time, the existence of an exact phase transition for the $\ell_1$ minimization approach to the complex valued CS problem. This discovery is not only a complementary result to the known phase transition of the real valued CS but also shows considerable superiority of the phase transition of complex valued CS over that of the real valued CS. The results are obtained by extending the recently developed ONE-L1 algorithms to complex valued CS and applying their optimal and iterative solutions to empirically evaluate the phase transition.

Support Recovery of Sparse Signals in the Presence of Multiple Measurement Vectors by Yuzhe Jin, Bhaskar D. Rao. The abstract reads:

This paper studies the problem of support recovery of sparse signals based on multiple measurement vectors (MMV). The MMV support recovery problem is connected to the problem of decoding messages in a Single-Input Multiple-Output (SIMO) multiple access channel (MAC), thereby enabling an information theoretic framework for analyzing performance limits in recovering the support of sparse signals. Sharp sufficient and necessary conditions for successful support recovery are derived in terms of the number of measurements per measurement vector, the number of nonzero rows, the measurement noise level, and especially the number of measurement vectors. Through the interpretations of the results, in particular the connection to the multiple output communication system, the benefit of having MMV for sparse signal recovery is illustrated providing a theoretical foundation to the performance improvement enabled by MMV as observed in many existing simulation results. In particular, it is shown that the structure (rank) of the matrix formed by the nonzero entries plays an important role on the performance limits of support recovery.Here is an interesting trade-off study:

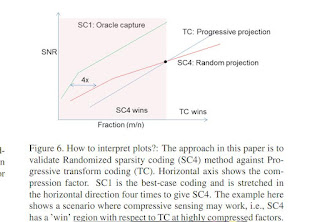

Progressive versus Random Projections for Compressive Capture of Images, Lightfields and Higher Dimensional Visual Signals by Rohit Pandharkar, Ashok Veeraraghavan, Ramesh Raskar. The abstract reads:

Progressive versus Random Projections for Compressive Capture of Images, Lightfields and Higher Dimensional Visual Signals by Rohit Pandharkar, Ashok Veeraraghavan, Ramesh Raskar. The abstract reads:Computational photography involves sophisticated capture methods. A new trend is to capture projection of higher dimensional visual signals such as videos, multi-spectral data and lightfields on lower dimensional sensors. Carefully designed capture methods exploit the sparsity of the underlying signal in a transformed domain to reduce the number of measurements and use an appropriate reconstruction method. Traditional progressive methods may capture successively more detail using a sequence of simple projection basis, such as DCT or wavelets and employ straightforward backprojection for reconstruction. Randomized projection methods do not use any specific sequence and use L0 minimization for reconstruction. In this paper, we analyze the statistical properties of natural images, videos, multi-spectral data and light-fields and compare the effectiveness of progressive and random projections. We define effectiveness by plotting reconstruction SNR against compression factor. The key idea is a procedure to measure best-case effectiveness that is fast, independent of specific hardware and independent of the reconstruction procedure. We believe this is the first empirical study to compare different lossy capture strategies without the complication of hardware or reconstruction ambiguity. The scope is limited to linear non-adaptive sensing. The results show that random projections produce significant advantages over other projections only for higher dimensional signals, and suggest more research to nascent adaptive and non-linear projection methods.

A simpler dictionary learning and decoder ?

A sparse coding model with synaptically local plasticity and spiking neurons can account for the diverse shapes of V1 simple cell receptive fields by Joel Zylberberg, Jason Timothy Murphy, Michael Robert DeWeese. The abstract reads:

Sparse coding algorithms trained on natural images can accurately predict the features that excite visual cortical neurons, but it is not known whether such codes can be learned using biologically realistic plasticity rules. We have developed a biophysically motivated spiking network, relying solely on synaptically local information, that can predict the full diversity of V1 simple cell receptive field shapes when trained on natural images. This represents the first demonstration that sparse coding principles, operating within the constraints imposed by cortical architecture, can successfully reproduce these receptive fields. We further prove, mathematically, that sparseness and decorrelation are the key ingredients that allow for synaptically local plasticity rules to optimize a cooperative, linear generative image model formed by the neural representation. Finally, we discuss several interesting emergent properties of our network, with the intent of bridging the gap between theoretical and experimental studies of visual cortex.

A Probabilistic Framework for Discriminative Dictionary Learning by Bernard Ghanem, Narendra Ahuja. The abstract reads:

In this paper, we address the problem of discriminative dictionary learning (DDL), where sparse linear representation and classification are combined in a probabilistic framework. As such, a single discriminative dictionary and linear binary classifiers are learned jointly. By encoding sparse representation and discriminative classification models in a MAP setting, we propose a general optimization framework that allows for a data-driven tradeoff between faithful representation and accurate classification. As opposed to previous work, our learning methodology is capable of incorporating a diverse family of classification cost functions (including those used in popular boosting methods), while avoiding the need for involved optimization techniques. We show that DDL can be solved by a sequence of updates that make use of well-known and well-studied sparse coding and dictionary learning algorithms from the literature. To validate our DDL framework, we apply it to digit classification and face recognition and test it on standard benchmarks.

I caught this one thanks to the Linkedin group (that has more than 1066 members now) Jerome Gilles has written the bregman cookbook toolbox for l1 minimization, from the page:

The Bregman Cookbook

The Bregman Cookbook is a collection of Matlab functions which use the different Bregman Iteration methods to solve some particular algorithms based on sparsity via L1-norm minimization. Except some generalities, the Bregman Cookbook is not devoted to the theoretical aspects of Bregman iteration methods but is more focused on their numerical implementations.Feel free to contact me if you need some help, report a bug or any other suggestions.If you want to contribute to the Bregman Cookbook, you can send me your code + a LateX file containing explanations on the numerical aspects of your algorithm (eventually with some references to specific publications).

The code is here and featured in the big picture sparse signal recovery section.

Dual-Level Compressed Aggregation: Recovering Fields of Physical Quantities from Incomplete Sensory Data by Liu Xiang, Jun Luo, Chenwei Deng, Athanasios V. Vasilakos, Weisi Lin. The abstract reads:

Although wireless sensor networks (WSNs) are powerful in monitoring physical events, the data collected from a WSN are almost always incomplete if the surveyed physical event spreads over a wide area. The reason for this incompleteness is twofold: i) insufficient network coverage and ii) data aggregation for energy saving. Whereas the existing recovery schemes only tackle the second aspect, we develop Dual-lEvel Compressed Aggregation (DECA) as a novel framework to address both aspects. Specifically, DECA allows a high fidelity recovery of a widespread event, under the situations that the WSN only sparsely covers the event area and that an in-network data aggregation is applied for traffic reduction. Exploiting both the low-rank nature of real-world events and the redundancy in sensory data, DECA combines matrix completion with a fine-tuned compressed sensing technique to conduct a dual-level reconstruction process. We demonstrate that DECA can recover a widespread event with less than 5% of the data, with respect to the dimension of the event, being collected. Performance evaluation based on both synthetic and real data sets confirms the recovery fidelity and energy efficiency of our DECA framework.

Sensitivity of the Random Demodulation Framework to Filter Tolerances by Pawel J. Pankiewicz, Thomas Arildsen, Torben Larsen. The abstract reads:

The aim of the present paper is to demonstrate the impact of low-pass filter non-idealities on compressed sensing signal reconstruction in the random demodulator (RD) architecture. The random demodulator is a compressed sensing (CS) acquisition scheme capable of acquiring signals in continuous time. One of the main advantages of the system is the possibility to use off-the-shelf components to implement this subNyquist framework. Low-pass filtering plays an important role in the RD analog acquisition process, which needs to be modeled carefully in the digital part of the compressive sensing reconstruction. Having a complete model of the analog front-end, CS algorithms conduct almost perfect reconstruction taking far less samples than for traditional Nyquist-rate sampling. This paper investigates reconstruction sensitivity to distortion in the impulse response of the low-pass filter caused by passive component value fluctuations. The authors simulate common CS recovery algorithms and show that the worst-case performance degradation due to filter component tolerances can be substantial, which requires special attention when designing reconstruction algorithms for RD.

Compressive Topology Identification of Interconnected Dynamic Systems via Clustered Orthogonal Matching Pursuit by Borhan M. Sanandaji, Tyrone L. Vincent, and Michael B. Wakin. The abstract reads:

In this paper, we consider topology identification of large-scale interconnected dynamical systems. The system topology under study has the structure of a directed graph. Each edge of the directed network graph represents a Finite Impulse Response (FIR) filter with a possible transport delay. Each node is a summer, whose inputs are the signals from the incoming edges, while the output of the summer is sent to outgoing edges. Edges of the graph can be of different unknown orders and delays. Both the graph topology and the FIR filters and delays that make up the edges are unknown. We aim to do the topology identification from the smallest possible number of node observations when there is limited data available and for this reason, we call this problem Compressive Topology Identification (CTI). Inspired by Compressive Sensing (CS) which is a recent paradigm in signal processing for sparse signal recovery, we show that in cases where network interconnections are suitably sparse (i.e., the network contains sufficiently few links), it is possible to perfectly identify the network topology along with the filter orders and delays from small numbers of node observations, even though this leaves an apparently illconditioned identification problem. The main technical novelty of our approach is in casting the identification problem as the recovery of a clustered-sparse signal z 2 RN from the measurements b = Az 2 R M with M \lt N, where the measurement matrix A is a block-concatenation of Toeplitz matrices. To this end, we introduce a greedy algorithm called Clustered Orthogonal Matching Pursuit (COMP) that tackles the problem of recovering clustered-sparse signals from few measurements. In a clustered-sparse model, in contrast to block-sparse models, there is no prior knowledge of the locations or the sizes of the clusters. We discuss the COMP algorithm and support our discussions with simulations.

Compressive System Identification of LTI and LTV ARX Models by Borhan M. Sanandaji, Tyrone L. Vincent, and Michael B. Wakin, Roland Toth, and Kameshwar Poolla. The abstract reads:

In this paper, we consider identifying Auto Regressive with eXternal input (ARX) models for both Linear Time-Invariant (LTI) and Linear Time-Variant (LTV) systems. We aim at doing the identification from the smallest possible number of observations. This is inspired by the field of Compressive Sensing (CS), and for this reason, we call this problem Compressive System Identification (CSI). In the case of LTI ARX systems, a system with a large number of inputs and unknown input delays on each channel can require a model structure with a large number of parameters, unless input delay estimation is performed. Since the complexity of input delay estimation increases exponentially in the number of inputs, this can be difficult for high dimensional systems. We show that in cases where the LTI system has possibly many inputs with different unknown delays, simultaneous ARX identification and input delay estimation is possible from few observations, even though this leaves an apparently illconditioned identification problem. We discuss identification guarantees and support our proposed method with simulations. We also consider identifying LTV ARX models. In particular, we consider systems with parameters that change only at a few time instants in a piecewise-constant manner where neither the change moments nor the number of changes is known a priori. The main technical novelty of our approach is in casting the identification problem as recovery of a block-sparse signal from an underdetermined set of linear equations. We suggest a random sampling approach for LTV identification, address the issue of identifiability and again support our approach with illustrative simulations.

A DoD report featuring a paper behind a paywall: Theory and Practice of Compressed Sensing in Communications and Airborne Networking by Stella N. Batalam. The abstract reads:

We consider the problem of compressed sensing and propose new deterministic constructions of compressive sampling matrices based on finite-geometry generalized polygons. For the noiseless measurements case, we develop a novel recovery algorithm for strictly sparse signals that utilizes the geometry properties of generalized polygons and exhibits complexity linear in the sparsity value. In the presence of measurement noise, recovery of the generalized-polygon sampled signals can be carried out most effectively using a belief propagation algorithm. Experimental studies included in this report illustrate our theoretical developments.

The paper is here.

Orthonormal Expansion `1-Minimization Algorithms for Compressed Sensing by Zai Yang, Cishen Zhang, Jun Deng, and Wenmiao Lu. The abstract reads:

Compressed sensing aims at reconstructing sparse signals from significantly reduced number of samples, and a popular reconstruction approach is `1-norm minimization. In this correspondence, a method called orthonormal expansion is presented to reformulate the basis pursuit problem for noiseless compressed sensing. Two algorithms are proposed based on convex optimization: one exactly solves the problem and the other is a relaxed version of the first one. The latter can be considered as a modified iterative soft thresholding algorithm and is easy to implement. Numerical simulation shows that, in dealing with noise-free measurements of sparse signals, the relaxed version is accurate, fast and competitive to the recent state-of-the-art algorithms. Its practical application is demonstrated in a more general case where signals of interest are approximately sparse and measurements are contaminated with noise.

Experimental verification of compressive reconstruction of correlation functions in Ambiguity space by Lei Tian, Justin Lee, Se Baek Oh, George Barbastathis. The abstract reads:

Phase space tomography estimates correlation functions entirely from multiple snapshots in the evolution of the system, rather than traditional interferometric methods requiring measurement of multiple two-point correlations. However, as in every tomographic formulation, undersampling poses a severe limitation. In the context of quantum correlation function measurements, a new theory utilizing compressive sensing was recently established [D. Gross et al. Phys. Rev. Lett. 105, 150401 (2010), M. Cramer et al. Nat. Comm. 1, 149 (2010)] whereby both measurement and post-processing dimensionality are reduced without giving up reconstruction fidelity. Here we present the first, to our knowledge, experimental demonstration of compressive reconstruction of the classical optical correlation function, i.e. the mutual intensity function which is of course the analogue to a conservative quantum state. We show that using explicitly physics-based assumptions we obtain substantial quantitative improvements in the reconstruction.

Interference Mitigation in Large Random Wireless Networks by Matthew Aldridge

A central problem in the operation of large wireless networks is how to deal with interference -- the unwanted signals being sent by transmitters that a receiver is not interested in. This thesis looks at ways of combating such interference.In Chapters 1 and 2, we outline the necessary information and communication theory background, including the concept of capacity. We also include an overview of a new set of schemes for dealing with interference known as interference alignment, paying special attention to a channel-state-based strategy called ergodic interference alignment.In Chapter 3, we consider the operation of large regular and random networks by treating interference as background noise. We consider the local performance of a single node, and the global performance of a very large network.In Chapter 4, we use ergodic interference alignment to derive the asymptotic sum-capacity of large random dense networks. These networks are derived from a physical model of node placement where signal strength decays over the distance between transmitters and receivers. (See also arXiv:1002.0235 and arXiv:0907.5165.)In Chapter 5, we look at methods of reducing the long time delays incurred by ergodic interference alignment. We analyse the tradeoff between reducing delay and lowering the communication rate. (See also arXiv:1004.0208.)In Chapter 6, we outline a problem that is equivalent to the problem of pooled group testing for defective items. We then present some new work that uses information theoretic techniques to attack group testing. We introduce for the first time the concept of the group testing channel, which allows for modelling of a wide range of statistical error models for testing. We derive new results on the number of tests required to accurately detect defective items, including when using sequential `adaptive' tests.

No comments:

Post a Comment